In my previous post, XSS Part 2 Attack and Defence, we looked at how an attacker can use XSS to steal a user’s session token from a cookie and then hijack the session.

We then enabled the HttpOnly option on our session cookie, preventing malicious JavaScript from accessing the cookie value and sending it to the attacker.

Whilst the HttpOnly option will prevent this scenario, unfortunately there is another we need to address.

Obligatory Warning

Before we go any further, the obligatory disclaimer/warning/nag – do not perform any of the attacks we’ll discuss on sites you do not have permission to test, otherwise you could get into a lot of trouble or even end up in jail.

A much better option to explore this topic is to use something like my deliberately vulnerable application https://github.com/alexmackey/hackthecat or HackTheBox.

Back to the content..

Ok, where were we?

Ah yes, the big issue we need to address: if an attacker can execute code on a web page then it will operate as if it was legitimate code written by the site author, with the same access to cookies, local storage and session storage.

Now browsers under most circumstances will automatically send cookies set by the site the user is browsing with any requests made via XHR and fetch (fetch sends cookies on same-origin requests by default in current browsers, while cross-origin requests need the credentials option set to “include”).

This can be a big issue when an XSS problem exists, because if a cookie value is used to identify a user (like in our example) and an attacker can get code to run on a page then they can call any API endpoint in the context of the logged in user!

Uh oh..

An attacker could then use this to:

- Hit an API to change the user’s password to a known value (we’ll do this in a minute!)

- Perform any valid action the user can – maybe this is transferring money in a banking application or purchasing products and sending them to the attacker or maybe exploiting some dumb Web3 NFT thing..

- Perform additional XSS attacks but as the user themselves e.g. messaging other users XSS nastiness

- ..and a thousand other horrendous things!

So how does this work?

Creating this attack is trivial and any web developer will have written similar (but probably better) code for completely legitimate purposes.

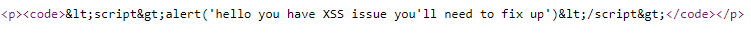

Below is an example of code that in the hackthecat application will change the password of the logged in user.

If we can get this to run in a page the user viewing it will have their password changed!

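<script>
// Build the same form fields the change password page would submit
var formData = new FormData();
formData.append("username", "user");
formData.append("newPassword", "changed!");
formData.append("confirmNewPassword", "changed!");

// POST to the same origin, so the browser attaches the session cookie automatically
var request = new XMLHttpRequest();
request.open("POST", "/user/change-password");
request.send(formData);
</script>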

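You can of course write this using fetch as well. Current browsers default the credentials option to “same-origin”, so cookies are sent for a same-origin request like this one. Older browsers defaulted to “omit”, so set credentials to “same-origin” or “include” explicitly if you need to be sure cookies are sent.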

To see this in action yourself simply log into the hack the cat application (user and pass will do), go to a product and add a review with the above code.

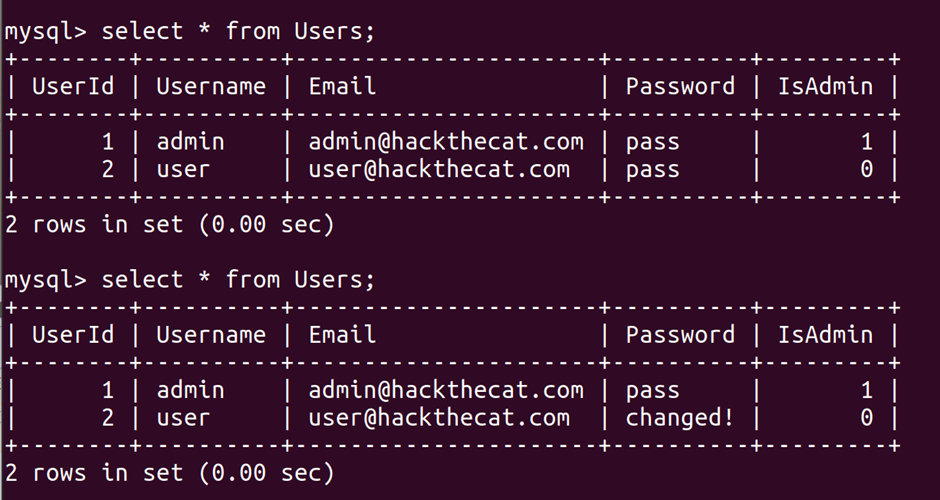

When this runs you’ll find it has changed the user’s password – here’s an exciting before and after MySQL query screenshot – note how the password is “changed!” in the second query:

Local/Session Storage and Session Tokens

Some sites use local or session storage to save a user’s session token and then append it to requests rather than storing tokens in a cookie.

I think generally, as the XSS code is executing in the context of the user, this approach is unlikely to provide any additional protection. In fact it could make things worse, because any script running in the page can read the token directly.

I’m of two minds about storing session tokens in local storage. Whilst you are arguably in trouble either way if an XSS issue exists (due to the attack type we are currently discussing), with local storage you also lose the benefit of how cookies work and expire, plus additional protections such as the HttpOnly and SameSite options that are baked into modern browsers.

CSRF (Cross Site Request Forgery) Tokens

Some of you might be thinking oh this is where CSRF tokens come in – they’ll save me!

CSRF tokens are intended for preventing a different issue (you can read more about them here: https://portswigger.net/web-security/csrf/tokens).

CSRF tokens are typically implemented as a special value stored in a hidden form field that is sent with any requests and compared server side.

To circumvent this protection the attacker only needs to read the value of a hidden form field, which er really isn’t too tricky for a script already running in the page, so in respect to this attack it doesn’t really provide any protection (except against some very lazy attackers).

You should still however use CSRF tokens as they will of course offer protection against CSRF attacks – exactly what they were intended for.
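To make the "hidden form field compared server side" idea concrete, here is a minimal sketch using only Node's built-in crypto module. The function names and the `_csrf` field name are my own assumptions for illustration and are not taken from hackthecat.

```javascript
const crypto = require("crypto");

// Generate an unguessable token to keep in the user's server-side session
// and embed in a hidden form field.
function createCsrfToken() {
  return crypto.randomBytes(32).toString("hex");
}

// Compare the submitted token with the session's copy in constant time.
function isValidCsrfToken(sessionToken, submittedToken) {
  if (typeof sessionToken !== "string" || typeof submittedToken !== "string") {
    return false;
  }
  const a = Buffer.from(sessionToken);
  const b = Buffer.from(submittedToken);
  // timingSafeEqual throws on different lengths, so check length first
  return a.length === b.length && crypto.timingSafeEqual(a, b);
}

// Render the hidden field (hypothetical field name "_csrf").
// The token is hex, so it is safe to place inside the attribute.
function csrfField(token) {
  return `<input type="hidden" name="_csrf" value="${token}">`;
}
```

Note the field ends up in the page's HTML, which is exactly why it is no obstacle to a script that is already running in that page.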

What can we do to prevent this attack?

Apart from preventing XSS issues in the first place, probably the most effective protection against this particular attack is to ensure that critical actions such as changing a password require the user to provide their original password first, as hopefully the attacker and script will not have access to this.
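Here is a rough sketch of what "provide the original password first" could look like on the server. It is not how hackthecat works: the in-memory `users` map, the function names and the use of scrypt hashing are all assumptions made so the example is self-contained.

```javascript
const crypto = require("crypto");

// Hash a password with a random salt using scrypt (built into Node).
function hashPassword(password, salt = crypto.randomBytes(16)) {
  return { salt, hash: crypto.scryptSync(password, salt, 64) };
}

// Constant-time check of a supplied password against the stored hash.
function verifyPassword(password, stored) {
  const candidate = crypto.scryptSync(password, stored.salt, 64);
  return crypto.timingSafeEqual(candidate, stored.hash);
}

// Hypothetical in-memory user store standing in for the real database.
const users = new Map([["user", hashPassword("pass")]]);

// Only change the password if the caller proves they know the current one.
function changePassword(username, currentPassword, newPassword, confirmNewPassword) {
  const stored = users.get(username);
  if (!stored) return { ok: false, reason: "unknown user" };
  if (!verifyPassword(currentPassword, stored)) {
    return { ok: false, reason: "current password incorrect" };
  }
  if (newPassword !== confirmNewPassword) {
    return { ok: false, reason: "new passwords do not match" };
  }
  users.set(username, hashPassword(newPassword));
  return { ok: true };
}
```

With a check like this in place, the injected script from earlier would be rejected unless it also knew the victim's current password.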

I guess you could also implement a CAPTCHA but no-one wants to count how many Volvos are in a crappy picture displayed in a 16×16 grid – no they really don’t – do better.

We are however going to make the attacker’s life a lot harder by using Content Security Policy (CSP to its friends).

CSP headers (Content Security Policy)

CSP policies give a site fine-grained control over what should and should not be running on a page and from where. The site declares the policy and the browser enforces it.

CSP policies are supported in every major browser and in a limited form in IE10+ (which hopefully you don’t have to support).

CSP policies can be set either in HTTP headers or meta tags and look something like this:

Content-Security-Policy: script-src https://simpleisbest.co.uk/

The above policy says scripts can only run from simpleisbest.co.uk, and it has some other effects I’ll discuss shortly.

Below is the meta tag equivalent:

<meta http-equiv="Content-Security-Policy" content="script-src https://simpleisbest.co.uk 'self';">

What can you do with CSP policies?

Let’s say we have set the following CSP header on my site simpleisbest.co.uk:

Content-Security-Policy: script-src https://simpleisbest.co.uk/

Adding this header to HTTP responses will do the following:

- Any inline scripts will be blocked (unless explicitly enabled with ‘unsafe-inline’ option) – this would prevent the XSS attack we have been discussing!

- Inline event handlers e.g. onclick=”doSomething()” will be blocked preventing another attack vector

- If the attacker tried to load a script from anywhere but simpleisbest.co.uk it’s going to be blocked

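- Several ways of turning strings into code, such as eval(), new Function(), and setTimeout, setInterval and setImmediate when they are passed a string rather than a function, are blocked unless specifically enabled with the ‘unsafe-eval’ option

- Note that script-src on its own does not stop an attacker sending data to another server with HTML forms; that requires the separate form-action directive (e.g. form-action 'self')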

Now the chances are when you first implement CSP policies especially on a site that has been around for a while some of these settings will break the site – you’ll find all sorts of dubious approaches in your code and perhaps third-party libraries doing some weird stuff!

Whilst you are first implementing CSP policies I suggest that instead of using “Content-Security-Policy” you use “Content-Security-Policy-Report-Only” – you’ll see what CSP would have stopped in the browser console instead of it being blocked, making it easier to fix things up.

Enabling specific inline scripts

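If for some reason you wanted to enable specific inline scripts (really?) but still benefit from the protections CSP offers, you can do this by adding a nonce to your inline script and then specifying the nonce to be expected. The nonce should be an unguessable random value generated fresh for each response (“test” is used below purely for illustration):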

Content-Security-Policy: script-src 'nonce-test'

<script nonce="test">

…

</script>
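A nonce only helps if the attacker cannot guess it, so it needs to be random and different on every response. Below is a minimal sketch of generating one with Node's built-in crypto module; `createNonce` and `renderWithNonce` are hypothetical helper names, not part of any library.

```javascript
const crypto = require("crypto");

// Generate a fresh, unguessable nonce; call this once per HTTP response.
function createNonce() {
  return crypto.randomBytes(16).toString("base64");
}

// Build the matching CSP header value and script tag for one response.
function renderWithNonce(scriptBody) {
  const nonce = createNonce();
  return {
    header: `script-src 'nonce-${nonce}'`,
    html: `<script nonce="${nonce}">${scriptBody}</script>`,
  };
}

const page = renderWithNonce("console.log('allowed');");
console.log("Content-Security-Policy: " + page.header);
console.log(page.html);
```

An injected script will not carry the right nonce value, so the browser refuses to run it even though it is inline.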

Ensure only specific scripts can be loaded

CSP policies allow us to ensure that only specific versions of scripts can be loaded by specifying an expected hash of the script like below. The hash is calculated over the contents of the script element, without the script tags themselves but including all whitespace, and it is case sensitive:

Content-Security-Policy: script-src 'sha256-yourSha256hashhere'

This is probably a good idea if you are referencing any scripts especially those on third party servers. This way you can be sure what your users will get is exactly as expected!

The downside of course is that it’s going to require some maintenance when scripts and assets are updated but it’s a small price to pay.
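If you want to generate the hash value yourself, something like the following should do it. This is a small sketch using Node's built-in crypto module; `cspHashSource` is a hypothetical helper name, and the input must be the exact text between the script tags.

```javascript
const crypto = require("crypto");

// Compute the CSP source expression for an inline script.
// scriptContents is the exact text between <script> and </script>,
// whitespace included.
function cspHashSource(scriptContents) {
  const digest = crypto
    .createHash("sha256")
    .update(scriptContents, "utf8")
    .digest("base64");
  return `'sha256-${digest}'`;
}

const inline = "console.log('hello');";
console.log("Content-Security-Policy: script-src " + cspHashSource(inline));
```

Changing even a single space in the script produces a different hash, which is why this needs redoing whenever the script changes.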

Which policies should I use?

CSP policies offer a huge number of settings to tweak.

Ideally, you want to only allow the minimum necessary for your site to function – this will reduce the attack surface of your site and make the attacker’s life really hard by limiting the options they have to work with.

OWASP list the following CSP policy on their cheat sheet page (https://cheatsheetseries.owasp.org/cheatsheets/Content_Security_Policy_Cheat_Sheet.html):

Content-Security-Policy: default-src 'none'; script-src 'self'; connect-src 'self'; img-src 'self'; style-src 'self'; frame-ancestors 'self'; form-action 'self';

This will do the following:

- All resources must be in the same domain as the document

- No inline scripts or styles, and no eval

- Other sites cannot frame the site

- No form submissions to external sites

- Prevents loading of anything other than scripts, AJAX requests, images and CSS (e.g. no objects, frames or media)

This seems a pretty good base to start from and adapt to your purposes.
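Long policy strings get hard to read and easy to typo, so one option is to keep the directives in an object and join them into the header value. This is just a sketch of that idea; `buildCsp` is a hypothetical helper rather than a library function.

```javascript
// The directives from the OWASP starter policy expressed as a plain object.
const directives = {
  "default-src": ["'none'"],
  "script-src": ["'self'"],
  "connect-src": ["'self'"],
  "img-src": ["'self'"],
  "style-src": ["'self'"],
  "frame-ancestors": ["'self'"],
  "form-action": ["'self'"],
};

// Join each directive with its sources, separating directives with "; ".
function buildCsp(policy) {
  return Object.entries(policy)
    .map(([name, sources]) => `${name} ${sources.join(" ")}`)
    .join("; ");
}

console.log("Content-Security-Policy: " + buildCsp(directives));
```

Adapting the policy then becomes a case of adding a source to the relevant array, for example a fonts host under style-src.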

Adding a CSP Policy to HackTheCat

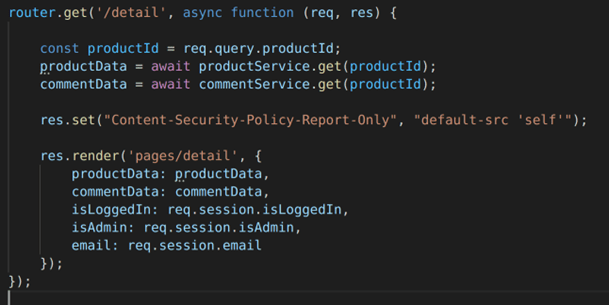

Ok let’s add a simple CSP policy to hackthecat site so we can see the impact.

We’ll do this directly in homeRoutes.js.

Add the following line before the res.render call in homeRoutes.js:

res.set("Content-Security-Policy-Report-Only", "default-src 'self'");

Note that really you want this header to apply to every response, so this isn’t the best way to do it as it will only apply to this endpoint. Also note that there are some third party Express libraries that will do this for you.

Once you’ve added this, restart the app, navigate to the page and you will then see something like the following in the browser console showing the inline script has been reported (in report-only mode nothing is actually blocked yet):

Changing this to “Content-Security-Policy” mode will also display information about the referenced stylesheet hosted on fonts.googleapis.com being blocked as we haven’t said this is OK in our policy:
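As noted above, setting the header in a single route only covers that endpoint. One way to cover every response is a small piece of Express-style middleware. The sketch below assumes an Express `app` object and uses a hypothetical `cspMiddleware` helper of my own; it is not code from hackthecat.

```javascript
// Build an Express-style middleware that sets a CSP header on every response.
// With reportOnly = true the Report-Only header is used, so violations are
// logged by the browser but nothing is blocked.
function cspMiddleware(policy, { reportOnly = true } = {}) {
  const headerName = reportOnly
    ? "Content-Security-Policy-Report-Only"
    : "Content-Security-Policy";
  return function (req, res, next) {
    res.set(headerName, policy);
    next();
  };
}

// Usage, assuming `app` is the Express application and this is registered
// before the routes:
// app.use(cspMiddleware("default-src 'self'"));
// Later, once the console is clean, switch to enforcing mode:
// app.use(cspMiddleware("default-src 'self'", { reportOnly: false }));
```

Keeping the report-only switch in one place makes it easy to flip to enforcing mode once the reported violations have been fixed.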

Deprecated approaches

You may come across a reference to various other XSS protection headers/options such as

- X-XSS-Protection

- X-Content-Security-Policy

- X-Webkit-CSP

- X-Frame-Options

These are all now deprecated or superseded by CSP policies (for X-Frame-Options you can use frame-ancestors to provide protection against frame based evilness).

Using these legacy approaches can even cause security issues so don’t use them.

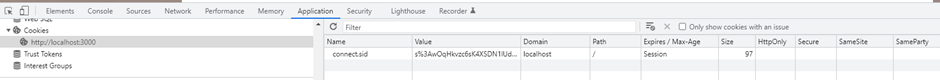

SameSite and SameParty

Before we wrap up this post I just wanted to tie up a loose end from the last post on SameSite and SameParty cookie options that you may have seen in the browser dev tools:

SameParty is a proposal designed to deal with the issue that modern sites are often served over multiple domains owned by the same entity. The intention is to allow developers more control over the privacy boundaries of where cookies are shared.

As this is still a proposal I’m not going to worry too much about it at this stage but it’s an interesting idea. You can read more about this proposal here: https://chromestatus.com/feature/5280634094223360.

SameSite however is ready to use now and supported in all major browsers apart from our old friend IE11.

SameSite is designed to protect against CSRF and potential information leakage by allowing developers to specify that a cookie should only be sent with requests initiated from the site that set it.

This is different to the traditional cookie behaviour where cookies are automatically sent to the domain that set them, regardless of which site initiated the request.

SameSite has 4 main options:

- Strict

- Lax

- None

- Unspecified (treated as Lax by Chromium-based browsers)

What do these SameSite options do?

Let’s assume a scenario where:

- The user has a cookie set by my domain simpleisbest.co.uk

- The user is on an external blog site hosted at wordpress.com

Strict

If the cookie had SameSite set to strict then the following will occur:

- If the blog site references an image on simpleisbest.co.uk then no cookies would be sent to simpleisbest.co.uk from this request

- If the user were to follow a link from wordpress to simpleisbest.co.uk then on the first request they make no cookies will be sent, offering protection against CSRF attacks that rely on the user being logged in and clicking a malicious link

- Once the user is on the site e.g. they click a link on simpleisbest.co.uk then cookies will be sent as they are now in a first-party context (i.e. on the site that set the cookie).

Lax

When SameSite is set to lax then:

- If the blog site was referencing an image on simpleisbest.co.uk then no cookies would be sent with that request, as per strict mode above

- Unlike strict mode however if the user follows a link to simpleisbest.co.uk then cookies will be sent (this only applies to top-level navigations using safe methods such as GET).

None

When SameSite is set to None:

- If the blog site was referencing an image on simpleisbest.co.uk then cookies would be sent with the request

- If the user follows a link from another site to the site that set the cookie (simpleisbest.co.uk) then cookies will be sent

- Note you must set the Secure attribute on cookies when using None

Unspecified

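Unspecified is now treated the same as Lax by Chromium-based browsers (a fairly recent change). Other browsers may behave differently, so it is safer to set SameSite explicitly.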
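To tie the options together, here is a rough sketch of building a Set-Cookie header value with an explicit SameSite attribute. `buildSessionCookie` is a hypothetical helper for illustration only; in a real Express app you would more likely pass these options to the framework's own cookie or session settings.

```javascript
// Build a Set-Cookie header value with an explicit SameSite attribute.
function buildSessionCookie(name, value, sameSite = "Lax") {
  const allowed = ["Strict", "Lax", "None"];
  if (!allowed.includes(sameSite)) {
    throw new Error("SameSite must be one of: " + allowed.join(", "));
  }
  const parts = [
    `${name}=${encodeURIComponent(value)}`,
    "Path=/",
    "HttpOnly",
    `SameSite=${sameSite}`,
  ];
  // SameSite=None is only accepted when the cookie is also marked Secure
  if (sameSite === "None") parts.push("Secure");
  return parts.join("; ");
}

console.log("Set-Cookie: " + buildSessionCookie("session", "abc123", "Strict"));
```

On a site served over HTTPS you would normally want Secure on the session cookie whichever SameSite value you pick; the sketch only adds it where it is mandatory.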

Ok let’s wrap things up – in this post we looked at how XSS can, without further protections in place, allow an attacker to execute actions in the context of the user such as changing their password or calling arbitrary endpoints via XHR or fetch.

We then looked at how CSP policies provide fine-grained control of what functionality can run in a page and can prevent this, or at least make an attacker’s life a lot harder. Finally we looked at the SameSite and SameParty cookie options.

In my next post I’ll show how an XSS issue can be exploited to run arbitrary code on a webserver which is pretty much as bad as things can get!