I’ve been interviewing web developers recently and was surprised that the majority did not know what XSS (Cross Site Scripting) is.

Perhaps part of this is that many web developers will begin their development career using a framework such as React or Angular that abstracts what’s actually going on.

Many frameworks now also provide sensible defaults that help prevent many XSS issues, and you have to go out of your way to get round them. For example, React warns you that you are probably about to do something silly with its wonderfully named dangerouslySetInnerHTML prop.

However, it’s still very possible to have XSS issues despite these protections and defaults, so if you are developing for the web it’s important you understand what XSS is and how to prevent it.

XSS is a surprisingly big topic (I certainly learnt stuff putting this series of blog posts together) and we’ll be going deep, so I’m going to split it into what’s hopefully a series of more digestible articles rather than a 30,000 word mega post.

In this series of posts, I will:

- Introduce XSS and talk about its different types

- Talk about what an attacker could do with XSS

- Show an example using my demo app of how XSS could lead to compromised accounts and even RCE (Remote Code Execution) when combined with other issues

- Look at ways to mitigate or prevent XSS

- Review some of the thousands of ways XSS can occur and how attackers circumvent various protective measures and what to do about it

Important!

As with any offensive security topic, it’s important you do not use these methods and techniques on sites you do not own or do not have permission to test.

Trying some of the techniques we’ll discuss on sites you don’t have permission to test is almost certainly illegal, and you could end up in a lot of trouble or even jail.

There are plenty of great labs for exploring security topics, such as Hack The Box and TryHackMe, as well as bug bounty programs where you can learn and help make stuff safer, which makes the world a better place.

These are all much better options than a criminal record or jail time.

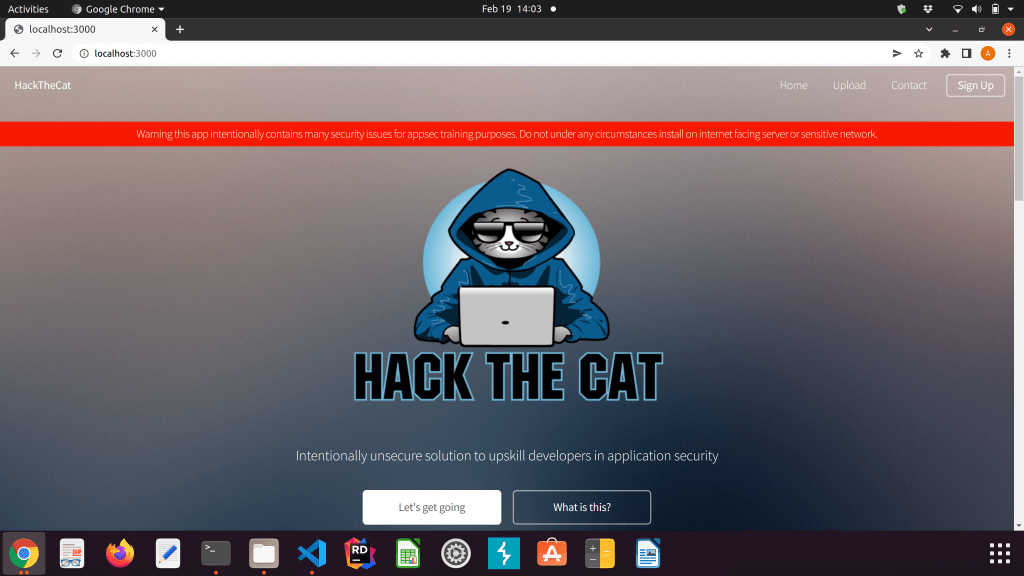

Hack The Cat (vulnerable Node/Express application for learning/teaching appsec)

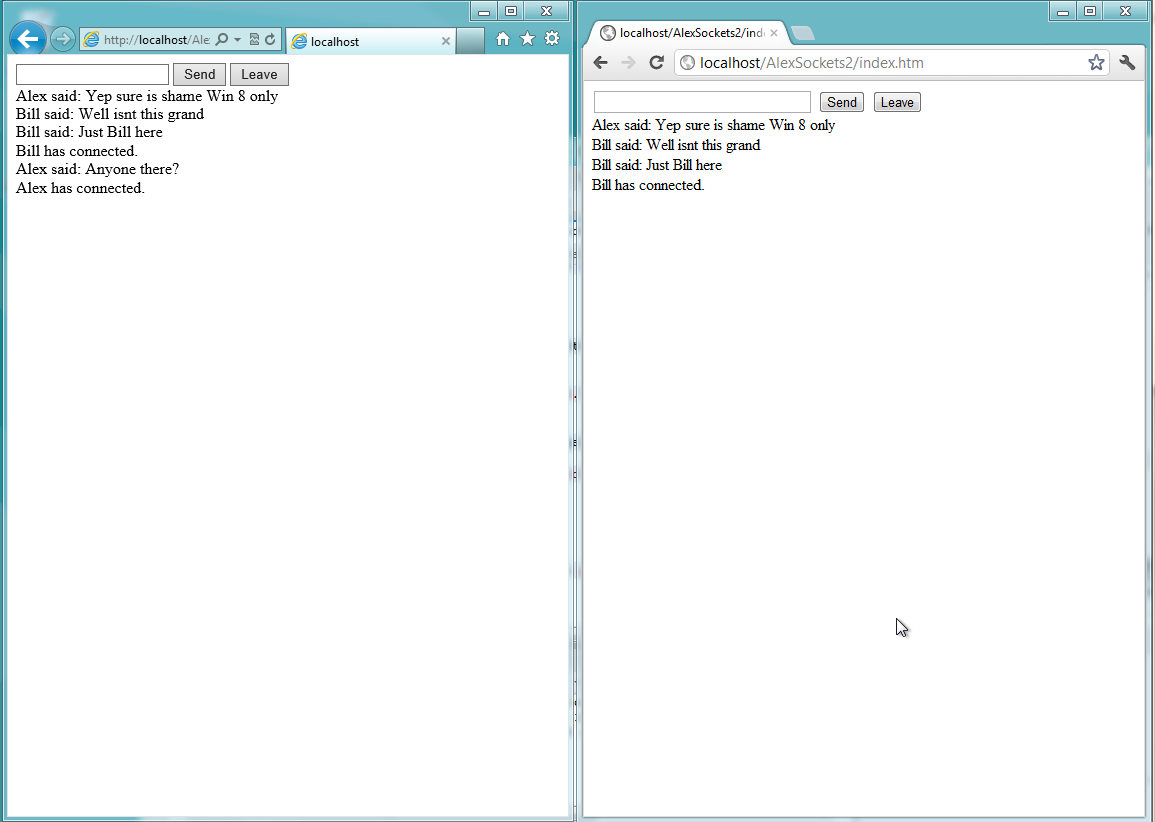

I wanted an application to play with, learn from and teach some of these issues, so I’ve been developing a deliberately vulnerable Node/Express application called Hack The Cat that contains many different security issues (below):

Whilst it’s early in its development, I’ve added various common security issues including:

- Various XSS vulnerabilities

- SQL Injection

- Serialization issue

- File upload with naïve filter

My plan is to expand upon this and also add the following:

- SSTI (Server Side Template Injection)

- More advanced XSS filters

- LFI & RFI (Local/Remote File Inclusion)

- OS command injection

- JWT issues

Anyway, I’ll release this shortly under an MIT license so it’s free for all, but let’s talk about XSS.

What exactly is XSS?

XSS, or Cross Site Scripting, is one of the most common web security issues. It occurs when a website accepts malicious client-side code or HTML and then serves it to a visitor, whose browser executes it in the context of that user on the site.

A simple example of XSS would be a shopping site that allows users to post reviews of products and then renders the reviews submitted.

If an attacker can submit a review containing a script block like the one below:

<script>alert("hello you have an XSS issue you'll need to fix up")</script>

and the site renders it without encoding it, then you’ll be greeted with an annoying, smug alert box.

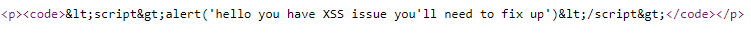

In fact, if you right-click this blog post, choose view page source and search for “hello”, you’ll see the script block above with its special characters encoded as HTML entities rather than as a live script tag.

Here, WordPress has thankfully encoded this code block properly. If it hadn’t, you’d all be seeing an alert box, as the script would run as if the original site developer had added it.
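To make the idea of encoding concrete, here is a minimal sketch of HTML-encoding user input before it is written into a page. The escapeHtml helper and its entity table are my own illustration, not WordPress’s actual implementation, and a real application would normally rely on its framework or templating engine to do this.

```javascript
// Hypothetical helper for illustration only (not WordPress's code).
// Maps the characters that are special in HTML to their entity form.
const HTML_ENTITIES = {
  '&': '&amp;',
  '<': '&lt;',
  '>': '&gt;',
  '"': '&quot;',
  "'": '&#39;',
};

function escapeHtml(text) {
  // Replace each special character with its entity so the browser
  // displays it as text instead of parsing it as markup.
  return String(text).replace(/[&<>"']/g, (ch) => HTML_ENTITIES[ch]);
}

const review = '<script>alert("hello")</script>';
console.log(escapeHtml(review));
// prints: &lt;script&gt;alert(&quot;hello&quot;)&lt;/script&gt;
```

Once encoded like this, the browser shows the review as harmless text rather than running it.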

If you want to see just how common XSS issues are and how they can be used, go to https://www.exploit-db.com/ (a site that contains a massive index of security issues and code for exploiting them) and search for “xss”. Wow, quite a few issues.

XSS was apparently “discovered” by the Microsoft Security Response Center and the IE Security team, who heard about attacks where script and image tags were injected into web pages. In 2000 they published a report in conjunction with CERT where the term Cross Site Scripting was first used.

Whilst popping up an alert box and taking your users back to the early 2000s web (or common current debugging techniques) is certainly annoying, an attacker could do much worse, and XSS can be used to devastating effect.

For this we need to get into cookies and sessions.

Cookies and Sessions

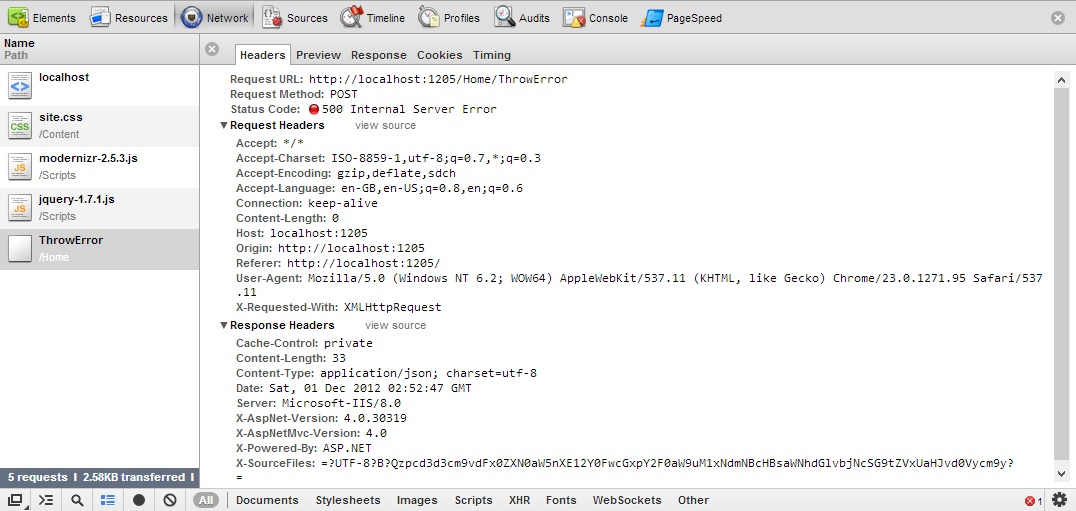

XSS can become especially destructive because of how website sessions & cookies work.

Cookies are pretty important and are one way of holding state about a visitor. They are one of the main mechanisms websites use to identify individual users and to understand whether a user is logged in.

When I log into many sites, the site will issue me a cookie that identifies me and shows that I should be able to access the site.

Now the thing about cookies is that the browser automatically sends them with requests to the site or “origin” that issued them (there are a few exceptions, such as cross-origin fetch calls, which don’t send cookies by default, and certain configurations we’ll talk about later).

This can be a big issue: if an attacker can get code to run on a site that uses cookies to identify users, the site may think requests made by that code come from a legitimate user!
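As a rough sketch of why this matters, the snippet below shows how a server might map a Cookie header to a logged-in user. The session store, the sessionId cookie name and both helper functions are assumptions made up for this illustration; real applications would normally use session middleware instead.

```javascript
// Hypothetical in-memory session store: session id -> user name.
const sessions = new Map([['abc123', 'alice']]);

// Parse a Cookie request header such as "theme=dark; sessionId=abc123".
function parseCookies(header) {
  const cookies = new Map();
  for (const pair of (header || '').split(';')) {
    const index = pair.indexOf('=');
    if (index === -1) continue; // skip empty or malformed parts
    cookies.set(pair.slice(0, index).trim(), pair.slice(index + 1).trim());
  }
  return cookies;
}

// The server only sees the cookie. It cannot tell whether the request
// was triggered by the real user or by injected script in their browser.
function identifyUser(cookieHeader) {
  const sessionId = parseCookies(cookieHeader).get('sessionId');
  return sessions.get(sessionId) ?? null;
}

console.log(identifyUser('theme=dark; sessionId=abc123')); // alice
console.log(identifyUser('theme=dark')); // null
```

Any request that arrives with a valid session cookie is treated as coming from that user, which is exactly what injected script can take advantage of.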

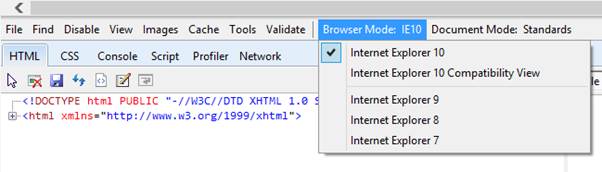

Same-origin policy

Before we move on I just want to mention the same-origin policy, as it relates to cookies and also prevents, or makes harder, some types of attack.

This can get quite complex so here’s an overly simplified version.

Same-origin policy places restrictions on what a document or script can do when a page is loaded from one origin and interacting with another.

Two URLs are considered to have the same origin if the following match:

- Protocol (http/https)

- Port (if specified; weirdly, IE ignores the port bit because, er, IE)

- Host (the address bit; it’s more complex than this but anyway)

The following for example would be considered to have the same origin:

https://simpleisbest.co.uk/some-page

Now this is important because if an attacker is running a script on another server (origin) then they won’t be able to do certain things (at least directly) like steal cookies. This is a good thing.
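The protocol, host and port comparison can be sketched with the standard URL class, whose origin property combines all three. The sameOrigin helper is my own name, and every URL other than the some-page one is a hypothetical example.

```javascript
// Compare two URLs by origin (protocol + host + port).
function sameOrigin(a, b) {
  return new URL(a).origin === new URL(b).origin;
}

const page = 'https://simpleisbest.co.uk/some-page';

// Same protocol, host and (default) port: only the path differs.
console.log(sameOrigin(page, 'https://simpleisbest.co.uk/another-page')); // true

// Different protocol.
console.log(sameOrigin(page, 'http://simpleisbest.co.uk/some-page')); // false

// Different port.
console.log(sameOrigin(page, 'https://simpleisbest.co.uk:8443/some-page')); // false

// Different host (a subdomain counts as a different host).
console.log(sameOrigin(page, 'https://blog.simpleisbest.co.uk/some-page')); // false
```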

OK, enough theory, let’s talk about different types of XSS.

Different Types of XSS

XSS comes in several different and overlapping ugly flavours you should be aware of:

- Stored XSS

- Blind XSS

- Reflected XSS

- DOM Based XSS

Persistent/Stored XSS

In Stored XSS the attacker manages to save their horrible XSS payload somewhere (most likely a database, as they are good for this kind of thing, unlike, er, blockchain, which really isn’t very good at anything).

The bad news with Persistent/Stored XSS is that once malicious code is stored, it could potentially be executed for thousands of users who don’t have to do anything but visit the page where the code is rendered, which can give it a massive reach.

I guess you’d also have to clean up the stored malicious content at some point in your database which could be really annoying if an attacker automated the creation of thousands of comments.

Blind XSS

Blind XSS is a form of Persistent/Stored XSS, with the difference that the attacker is not sure when the code will execute, or whether it will execute at all.

Er what?

Imagine an application that allows the sending of a contact form message to admin users.

When an admin user views this message, the malicious code executes in the context of that admin user. It could then potentially be used to perform administrative functions, steal the admin’s session cookie and so on.

In Blind XSS the attacker may not have access to the code base/application, so they can’t be sure their code will ever actually execute.

An attacker might send code that “pings” a remote server or endpoint, perhaps by requesting an image, and then monitor that server to see whether the Blind XSS attack was successful. The code could then perhaps send back more information about the page it’s executing on.

Reflected XSS

Reflected XSS occurs when the XSS payload is not actually stored but is “reflected” back to the user.

A site may have a search function that allows users to search for products, takes the search value from the query string and writes it into the results page unencoded e.g. simpleisbest.co.uk?search=<script>something bad</script>

Attackers could potentially send users links like the above that point at a legitimate site, which then serves their malicious code to the user’s browser.

An attacker would likely take care to obscure their payload more carefully than in the above example, using various methods e.g. JavaScript’s String.fromCharCode method and a hellish number of different encoding schemes that most browsers support.
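To illustrate why simple keyword filtering struggles against this kind of obscuring, here is a small sketch. The naiveFilter function is a deliberately weak filter I made up for the example, and the payload strings are only inspected as text here, never executed.

```javascript
// Deliberately naive filter (an assumption for illustration):
// accept input only if it does not contain the word "alert".
function naiveFilter(input) {
  return !input.toLowerCase().includes('alert');
}

// String.fromCharCode builds a string from character codes,
// so the word "alert" never appears literally in the payload.
console.log(String.fromCharCode(97, 108, 101, 114, 116)); // alert

const plain = "alert('xss')";
const obscured = 'window[String.fromCharCode(97,108,101,114,116)](1)';

console.log(naiveFilter(plain)); // false (blocked by the filter)
console.log(naiveFilter(obscured)); // true (slips through the filter)
```

This is one reason why encoding output tends to be more reliable than trying to list everything that looks dangerous.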

It’s important to note that reflected XSS requires a user to perform an action (e.g. click the link) and may be avoided by more knowledgeable users or automated systems that scan emails for malicious content.

Reflected XSS attacks are likely to have limited reach compared to persistent/stored XSS.
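A reflected XSS issue in a search page could look roughly like the sketch below, which builds the response HTML as a string. Both render functions and the escapeHtml helper are hypothetical names of mine, and a real Express app would usually render through a templating engine that encodes output by default.

```javascript
// Hypothetical encoding helper for this sketch.
function escapeHtml(text) {
  const entities = { '&': '&amp;', '<': '&lt;', '>': '&gt;', '"': '&quot;', "'": '&#39;' };
  return String(text).replace(/[&<>"']/g, (ch) => entities[ch]);
}

// Vulnerable: the raw search value is reflected straight into the HTML.
function renderSearchUnsafe(queryString) {
  const term = new URLSearchParams(queryString).get('search') ?? '';
  return `<p>Results for ${term}</p>`;
}

// Safer: the search value is encoded before it is reflected.
function renderSearchSafe(queryString) {
  const term = new URLSearchParams(queryString).get('search') ?? '';
  return `<p>Results for ${escapeHtml(term)}</p>`;
}

const query = 'search=<script>alert(1)</script>';
console.log(renderSearchUnsafe(query));
// prints: <p>Results for <script>alert(1)</script></p>
console.log(renderSearchSafe(query));
// prints: <p>Results for &lt;script&gt;alert(1)&lt;/script&gt;</p>
```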

DOM (Document Object Model) based XSS

In DOM based XSS the attacker finds a way to inject a payload into the page’s DOM via client-side JavaScript, without the HTML sent by the server being modified.

A site may, after login, put the user’s name in a query string, which client-side script then reads to display a welcome message on the page e.g. simpleisbest.co.uk?username=Alex

If the receiving code doesn’t encode the output properly, an attacker could supply a malicious bit of code in the username parameter that would then be output and executed on the page.

DOM based XSS can be harder to find/scan for, as the vulnerable code runs in the browser and the payload may never reach the server itself (for example, when it is carried in the part of the URL after the #, which browsers don’t send to the server).
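The welcome message example might be written along these lines. The function names, the #welcome selector and the fake element object are assumptions for this sketch, and the fake element is only there so the snippet also runs outside a browser.

```javascript
// Vulnerable pattern: the username is parsed as HTML by the browser.
function showWelcomeUnsafe(element, search) {
  const name = new URLSearchParams(search).get('username') ?? 'guest';
  element.innerHTML = `Welcome ${name}`;
}

// Safer pattern: the username is treated as plain text, never as markup.
function showWelcomeSafe(element, search) {
  const name = new URLSearchParams(search).get('username') ?? 'guest';
  element.textContent = `Welcome ${name}`;
}

// In a browser this might be called as (hypothetical selector):
//   showWelcomeSafe(document.querySelector('#welcome'), window.location.search);

// Stand-in object so the sketch can be run outside a browser.
const fakeElement = {};
showWelcomeSafe(fakeElement, '?username=Alex');
console.log(fakeElement.textContent); // Welcome Alex
```

Note that browsers don’t run script tags inserted through innerHTML, but markup with event handler attributes (such as an img tag with an onerror attribute) does execute, so the unsafe version is still exploitable.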

So what’s the big deal about XSS?

Whilst using XSS to pop up an alert box or maliciously redirect users to Michael Buble’s site could be very annoying, XSS can be devastating when chained with other attacks.

Depending on the other protections & configuration in place (which we’ll talk about in future posts), XSS can be used to do pretty much anything a user on that page could do, as the code executes in the context of that user’s browser.

This means of course that the code can:

- Traverse and manipulate the page’s DOM

- Read the user’s cookies, local and session storage (depending on other protections in place)

- Send requests to backend APIs in the context of the current user even if not used on the current page

- Redirect the user to other sites/applications

- Use modern browser APIs to access the camera, geolocation, microphone and certain files (these will require the user to grant permission if they haven’t already)

And endless other possibilities: basically anything you could do with client-side code in the context of the current user.

This means an attacker could:

- Harvest and steal session cookies (depending on other protections in place) allowing them to act as if they are the user logged in until the cookie/token expires or is revoked

- Perform actions in the context of the current user as the browser will send cookies with any requests made e.g. change their password to something known

- Traverse & manipulate the DOM to pull out sensitive information

- Manipulate the DOM and trick users into performing certain actions or reading wrong information

- Redirect the user to other sites e.g. a site hosting malware or Michael Buble’s music

- Deface a site and replace it with other content (hopefully not Mr Buble’s music)

OK, so hopefully I’ve convinced you XSS can be a pretty big deal.

In the next post I’ll show an example with my demo application of how it could be used to gain control of an admin account and even an entire server, and then we’ll look at how we can fix this up.

Further reading